A tech startup that relies entirely on the AI tool Claude suddenly found itself almost at a standstill when its access was blocked. A solution came only hours later.

Which startup was denied access? The Argentine fintech startup Belo used Anthropic's AI tool Claude as a central part of its daily work. This became a problem when all of its accounts were suddenly deactivated.

As the company's CTO, Pato Molina, shared on X, more than 60 employees lost access simultaneously. He publicly criticized the incident and said the block came without any prior warning.

– Pato Molina via X

Anthropic decided to block our entire organization due to an alleged violation of their terms of use. What specific policy we violated, no idea – we just received an email, and bam, goodbye Claude. If you want to contest the measure, you have to fill out a Google form, as ridiculous as it sounds.

Fragile Dependency

How badly was the company affected? The impact on the startup was substantial. Because many internal processes ran through the AI, a large part of daily work could no longer be carried out (Times of India).

For a tech company that heavily relies on efficiency and speed, such a failure means not only delays but also economic damage. The situation also made it clear to the CTO how dependent certain industries have become on external services.

Pato Molina emphasized on X that software companies that depend on AI tools for critical processes must not put all their eggs in one basket.

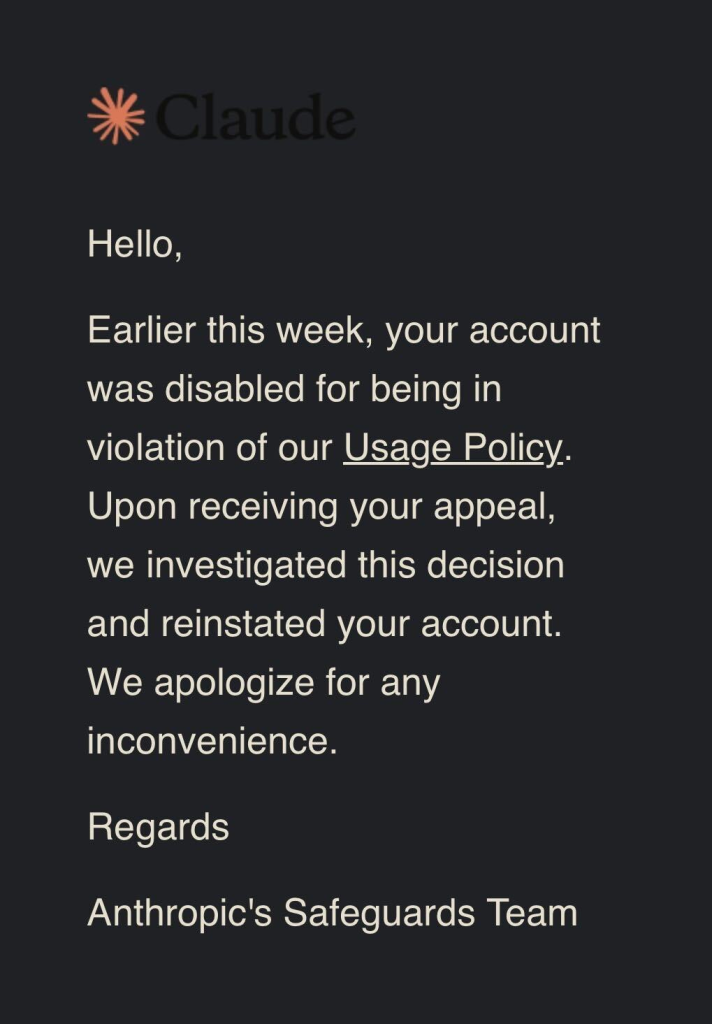

Was there an explanation from Anthropic? For several hours, it remained unclear why the accounts had been blocked. The lack of any prior indication or warning was especially frustrating. Later, Anthropic explained that it was apparently an automated security measure.

Such systems usually intervene as soon as unusual activity is detected. After an internal review, access was finally restored, which suggests that no policy violation had occurred.

When a single service fails, it is not just one tool that stops working; sometimes a large part of a company does. Some areas of work now rely so heavily on AI solutions that real dependencies arise. AI can also make terrible mistakes, as a recent example shows: a grandmother ended up in jail because detectives trusted an artificial intelligence.
