Anyone who has played Counter-Strike: Global Offensive (CS:GO) has likely run into its toxic player base. An artificial intelligence is now tackling the problem, and it is quite harsh about it.

What kind of AI is this? The artificial intelligence is called Minerva and was developed by the online gaming platform FACEIT. The AI is supposed to read and evaluate chat messages in CS:GO directly.

It has been in use since August 2019 and is already showing strong results. Over 7 million messages have been flagged as toxic, and more than 100,000 players have been warned or banned.

Minerva is harsh and punishes repeat offenders more severely

This is how Minerva operates: The artificial intelligence monitors the players' chat messages during the game. If it detects a violation, it notifies the offending player immediately after the match.

A first-time offender receives a warning. Repeat offenders are banned and are no longer allowed to play.
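The warn-first, ban-on-repeat rule can be illustrated with a short Python sketch. It is only an illustration under stated assumptions: FACEIT has not published Minerva's internals, so the keyword check below is a hypothetical stand-in for the trained model, and the names `is_toxic`, `Moderator` and `review_match` are invented for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical word list standing in for a trained classifier.
# Minerva's real model is not public; this is for illustration only.
TOXIC_TERMS = {"idiot", "trash"}


def is_toxic(message: str) -> bool:
    """Return True if any word in the message is on the placeholder list."""
    words = message.lower().split()
    return any(word.strip(".,!?") in TOXIC_TERMS for word in words)


@dataclass
class Moderator:
    # Number of earlier matches in which each player was flagged.
    prior_offenses: dict[str, int] = field(default_factory=dict)

    def review_match(self, chat_log: list[tuple[str, str]]) -> dict[str, str]:
        """Review one finished match and return a decision per offender.

        chat_log is a list of (player, message) pairs. A player flagged
        for the first time gets a warning; a player flagged again in a
        later match gets a ban.
        """
        offenders = {player for player, message in chat_log if is_toxic(message)}
        decisions = {}
        for player in sorted(offenders):
            count = self.prior_offenses.get(player, 0)
            decisions[player] = "warning" if count == 0 else "ban"
            self.prior_offenses[player] = count + 1
        return decisions
```

In this sketch the decision is made once per match, after the chat log is complete, which mirrors the article's description of players being notified right after the match ends.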

How successful is the program? According to FACEIT, Minerva is extremely successful. The program has already warned 90,000 players and issued 20,000 bans. That is a lot, but it matches the findings of a study from this summer, which ranked CS:GO among the most toxic online games.

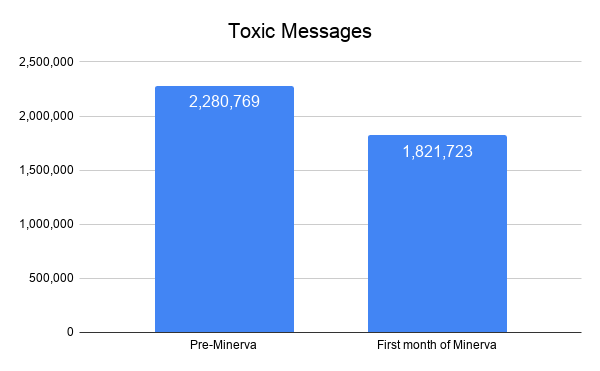

As a result, the number of toxic messages fell by 20% from August to September. The number of unique players sending toxic messages fell by 8%.

This is what FACEIT says: The developer of the artificial intelligence is enthusiastic about Minerva. FACEIT sees this as just the beginning and says Minerva can be developed and improved further from here.

They state in their blog: “In the coming weeks, we will announce new systems to support Minerva in its training.”

The artificial intelligence remains one to watch. If this trend continues, Counter-Strike: Global Offensive could soon be significantly less toxic, a goal that games like Rainbow Six Siege share.

