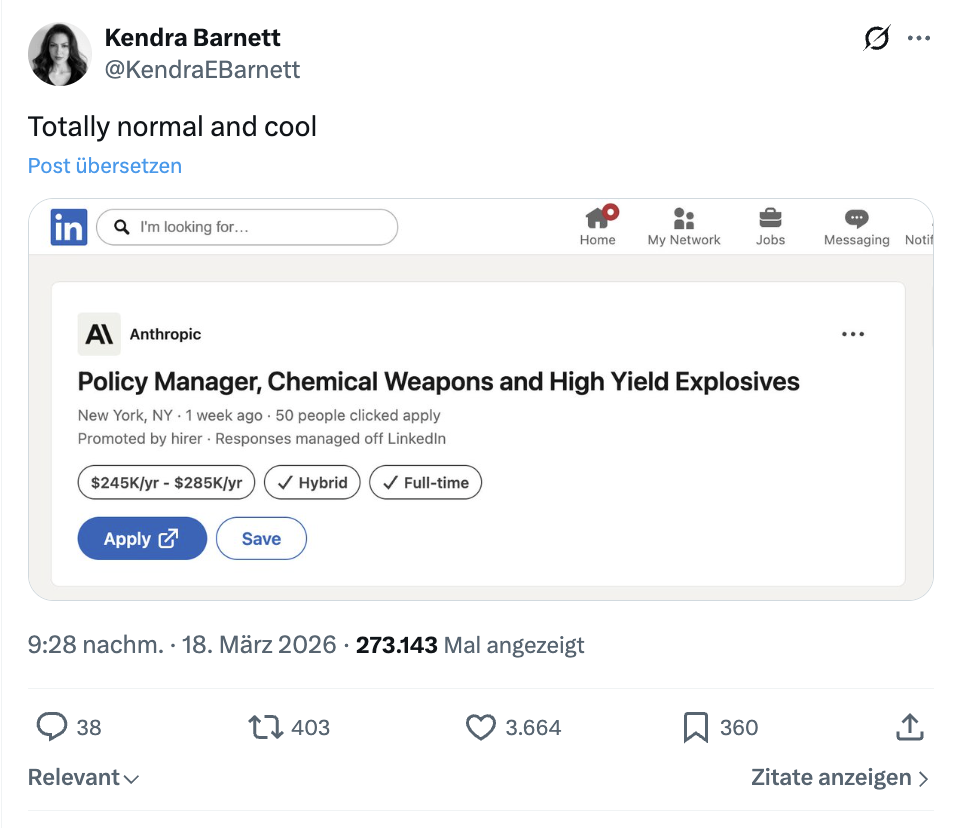

One of the largest AI companies has posted an unusual job vacancy: it is looking for an expert in, among other things, weapons. The role is not about building arms, however, but reflects growing concern about misuse of the company's own AI.

What exactly has been advertised? The AI company Anthropic, known for its chatbot Claude, is looking for a “Policy Manager” for chemical weapons and high explosives.

The job sounds somewhat unusual at first, but the context is crucial: it is not about developing weapons, but about preventing AI from assisting with such tasks.

Further reports confirm that such experts are needed to build so-called “guardrails”: safeguards that set boundaries for a model and block dangerous requests (Yahoo News & BBC).

According to the tech magazine Mashable, the successful candidate will help develop rules and safeguards so that AI systems cannot be misused. The focus is on the manufacture of weapons and explosives.

For the Protection of All

Why does an AI company suddenly need weapons experts? The background is a growing problem: AI models are becoming increasingly powerful and thus potentially dangerous. Anthropic has recently tightened its policies (Verge & Anthropic). Using its AI to develop any of the following is now expressly prohibited:

- chemical weapons

- biological weapons

- nuclear or radiological weapons

- high-yield explosives

At the same time, experts from various fields are now meant to ensure that the AI reliably recognizes real-world attempts by users to generate dangerous content.

How real is the danger posed by AI and weapons? Research already shows that modern AI systems can give understandable explanations of complex scientific processes, including those that could be misused. Without safeguards, large language models could theoretically make knowledge about chemical or biological substances more accessible (Cornell University).

Anthropic itself states that its models could be misused to enable “serious crimes” if protective mechanisms were to fail (Axios).

At the same time, there is intense discussion in the USA about how AI can be used by the military. While authorities and the Pentagon are increasingly relying on autonomous systems, Anthropic has taken a cautious position.

According to the news agency Reuters, there have even been tensions with the US government because the company is critical of certain military applications, such as autonomous weapon systems, and does not want to support them unconditionally.

Dario Amodei, CEO of Anthropic, has stressed one point above all in several interviews and statements: AI must be strictly controlled, especially when it comes to military use or autonomous systems (CBS News via Reddit).

AI models can open up entirely new risks of misuse in many areas, including weapon possession. Despite these dangers, widely accessible language models such as ChatGPT and Claude also offer many advantages: A man sells his house, letting ChatGPT tell him how to paint the walls: “Expectations exceeded”