OpenAI proudly announced that GPT-5 had solved long-standing open math problems. As it turns out, that was a misunderstanding: the AI had merely surfaced existing research findings.

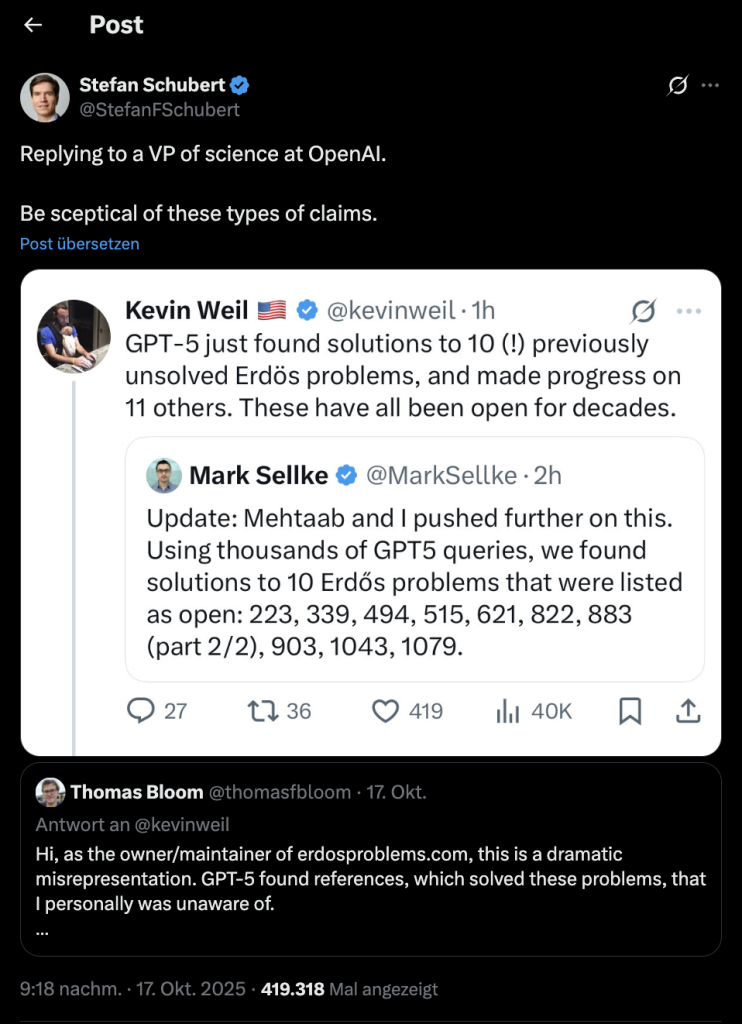

What was actually claimed? On October 17, 2025, Harvard University assistant professor of statistics Mark Sellke posted on X, creating the impression that GPT-5 had helped solve previously unsolved mathematical problems.

Subsequently, OpenAI executive Kevin Weil claimed that GPT-5 “solved 10 open Paul Erdős problems” and made significant progress on another 11.

This statement was made in a now-deleted tweet, without a clear explanation of what exactly was meant or what evidence underpinned it (via Xataka). The only reference was Sellke’s original post.

What are these Erdős problems anyway? Paul Erdős was a legendary Hungarian mathematician known for his enormous number of publications and for posing profound mathematical questions. Many of these questions, known as Erdős problems, are considered particularly difficult and remain unsolved.

They concern, among other things:

- Prime numbers and their distribution

- Graph theory (e.g., networks or nodes)

- Combinatorics (the mathematics of counting and arranging)

On the platform erdosproblems.com, these problems are documented and linked to current mathematical papers.

Just copied?

What went wrong with OpenAI’s statement? OpenAI based its claim on the platform “Erdős Problems,” where researchers track progress on these questions. In doing so, Kevin Weil misinterpreted the assistant professor’s post.

Mathematician Thomas Bloom, who runs the platform on which these open mathematical questions are collected, quickly clarified what had really happened. As he explained on X, OpenAI’s presentation was a “dramatic misinterpretation.” GPT-5 had merely found existing studies and research findings.

So the problems were not solved in the mathematical sense. Instead, the language model merely matched known papers to the problems or trivially extended them, which OpenAI incorrectly interpreted as a “solution.”

GPT-5 simply reorganized existing information. Solving such problems independently is, at this point, beyond the capabilities of large language models (LLMs) (via Xataka).

The incident is somewhat emblematic of the state of the AI world in 2025: the pressure to deliver new breakthroughs is enormous. Companies like OpenAI, Google DeepMind, and Meta vie for attention, often with exaggerated promises.

But it’s not just large corporations that risk relying too much on artificial intelligence – even in everyday life, an AI can totally miss the mark. In another, somewhat absurd case, a user tried to correct a harmless error with Google’s AI Gemini – and triggered an unwanted endless loop: Google AI goes wild and insults itself 86 times
